I develop creative tools for interactive audio-visual experiences using machine learning models and software and hardware platforms such as C++, C#, Max/MSP/Jitter and Pure Data for procedural audio, Unity3D, Oculus Rift, HTC Vive and Google Cardboard. Below you can find a summary of my projects.

WaveRiding: Machine-learning-controlled wavetable synthesis in Max/MSP via Wekinator. Download the full package here.

Delay/Chorus AU: An Audio Unit (AU) delay/chorus effect plug-in developed in C++. Download and installation info here.

MICTIC: “The world’s first AUDIO AUGMENTED REALITY wearable that truly immerses you in music”. Additional audio recordings, editing and audio software programming by Franky Redente.

Audio/Visual reactive Max for Live device: This patch is based on Andrew Benson’s SoundLump code.

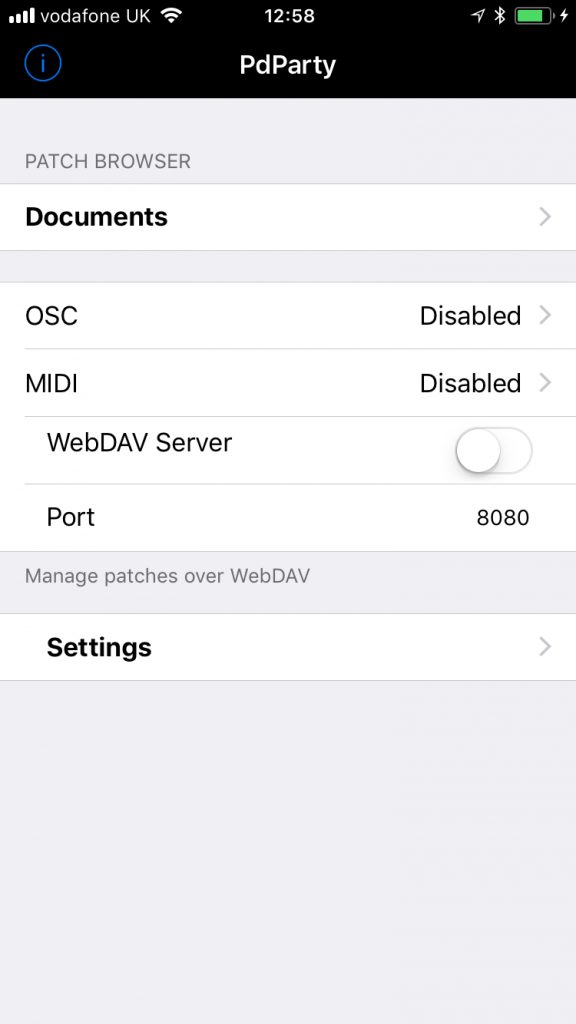

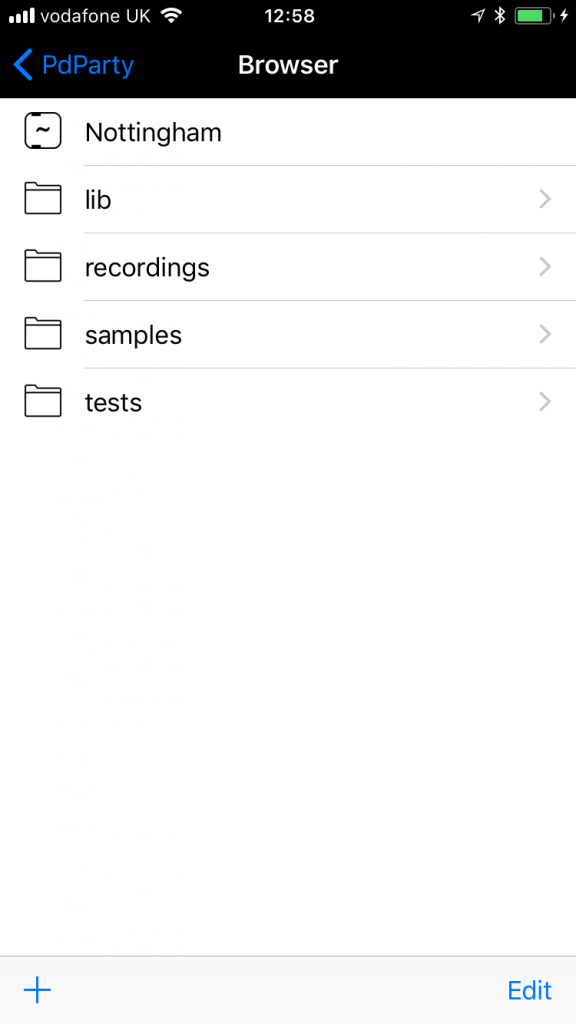

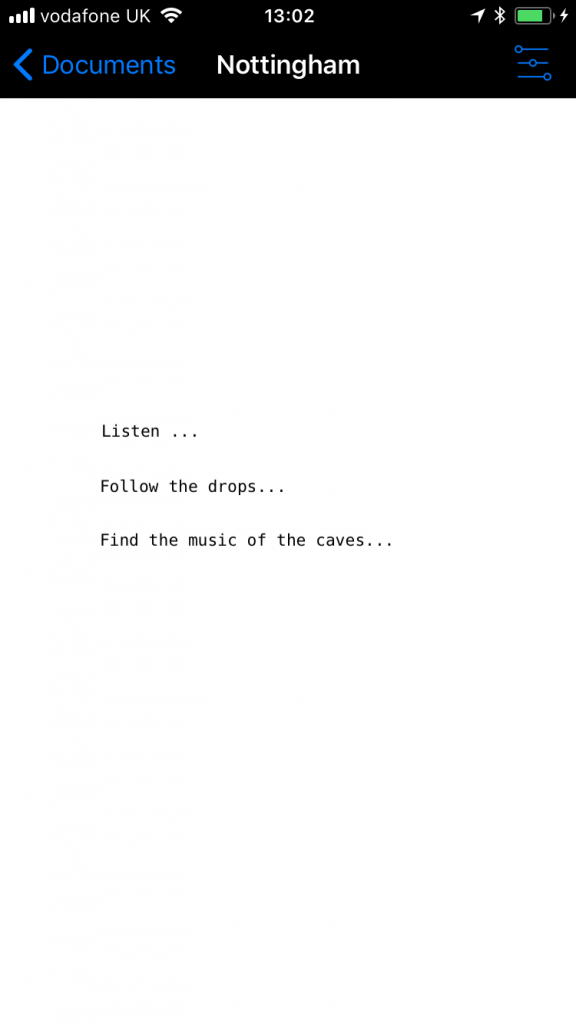

GPS-driven adaptive music experience: A portable adaptive music composition driven by the GPS coordinates of the Nottingham Caves. Designed and programmed in Pure Data with Robert Thomas, who also composed the music. Commissioned by Adrian Hazzard of the Nottingham University Mixed Reality Lab.

Procedural-Interactive Granular Synthesis in Virtual Reality using HTC VIVE, Unity3D and Pure Data: This research project was created together with Robert Thomas, who presented it at the V&A during the “Opera: Passion, Power and Politics” exhibition.

Google Cardboard – Immersive audio-visual experience for web and mobile VR: 3D graphics, sound design and mix by Franky Redente. Music by Franky Redente and Cristian Duka.

Procedural FM Synthesis in VR – Interactive FM Synthesiser in Virtual Reality: This project explores procedural audio techniques and real-time sound synthesis driven by user interaction, using a Leap Motion controller and the Oculus Rift.

Interactive Spatial Audio in Virtual Reality: Leap Motion, Unity3D, Oculus Rift.

Interactive Web FM Synthesis using Pure Data and Enzien Audio.

GEM SOUND OBJECTS: Audio-visual interactive musical instrument designed and created in Pure Data using the GEM library (Graphics Environment for Multimedia), with sound synthesis and user interaction via MIDI control change (CC) messages.

OBJETS SONORES: Research project on how we relate to the gestural control of sound objects in Virtual Reality with binaural audio.